Triangulation

Wednesday, January 14, 2009 | Permalink

When you look at a highly tessellated model it's generally understood that it will be vertex processing heavy. Not quite as widely understood is the fact that increasing polygon count also adds to the fragment shading cost, even if the number of pixels covered on the screen remains the same. This is because fragments are processed in quads. So whenever a polygon edge cuts through a 2x2 pixel area, that quad will be processed twice, once for both of the polygons covering it. If several polygons cut through it, it may be processed multiple times. If the fragment shader is complex, it could easily become the bottleneck instead of the vertex shader. The rasterizer may also not be able to rasterize very thin triangles very efficiently. Since only pixels that have their pixel centers covered (or any of the sample locations in case of multisampling) are shaded the quads that need processing may not be adjacent. This will in general cause the rasterizer to require additional cycles. Some rasterizers may also rasterize at fixed patterns, for instance an 4x4 square for a 16 pipe card, which further reduces the performance of thin triangles. In addition you also get overhead because of less optimal memory accesses than if everything would be fully covered and written to at once. Adding multisampling into the mix further adds to the cost of polygon edges.

The other day I was looking at a particularly problematic scene. I noticed that a rounded object in the scene was triangulated pretty much as a fan, which created many long and thin triangles, which was hardly optimal for rasterization. While this wasn't the main problem of the scene it made me think of how bad such a topology could be. So I created a small test case to measure the performance of three different layouts of a circle. I used a non-trivial (but not extreme) fragment shader.

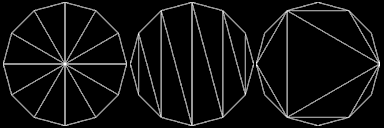

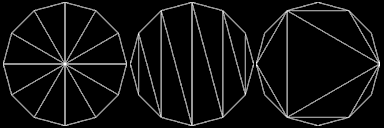

The most intuitive way to triangulate a circle would be to create a fan from the center. It's also a very bad way to do it. Another less intuitive but also very bad way to do it is to create a triangle strip. A good way to triangulate it is to start off with an equilateral triangle in the center and then recursively add new triangles along the edge. I don't know if this scheme has a particular name, but I call it "max area" here as it's a greedy algorithm that in every step adds the triangle that would grab the largest possible area out of the remaining parts on the circle. Intuitively I'd consider this close to optimal in general, but I'm sure there are examples where you could beat such a strategy with another division scheme. In any case, the three contenders look like this:

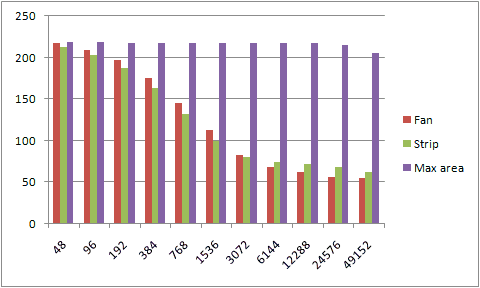

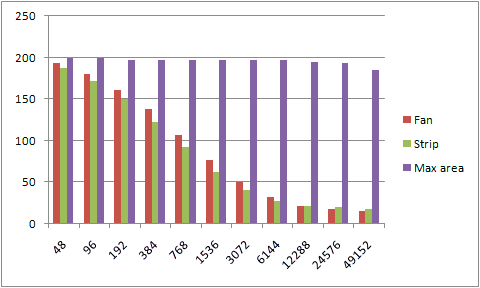

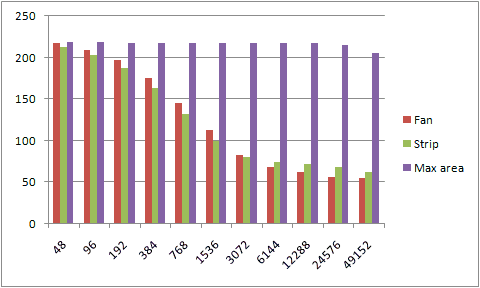

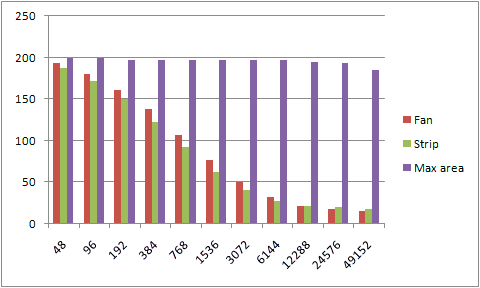

And their performance look like this. The number along the x-axis is the vertex count around the circle and the y-axis is frames per second.

Adding multisampling into the mix further adds to the burden with the first two methods, while the max area division is still mostly unaffected by the added polygons all the way across the chart.

[

35 comments |

Last comment by James N (2024-02-08 23:51:45) ]