Happy Anniversary AMD!

Friday, May 1, 2009 | Permalink

Today AMD turns 40. And what's a 40-year-old without a bit of a crisis? Congratulations and good luck in the future!

I worked for AMD for about a year. I came originally from ATI but became part of AMD due to the merger in 2006. I continued with the same kind of job under AMD until the fall of 2007, after which I moved home to Sweden again and joined Avalanche Studios. Greetings to all my old friends who are still at AMD! Keep up the good work!


Today's whine

Wednesday, April 29, 2009 | Permalink

As I touched on briefly in my post on piracy, we live in a globalized world and regionalizing digital products makes absolutely no sense in this day and age. This is not something that the average broadcast corporation understands, but some do. Or did. It's with great disappointment I see that Comedy Central has now implemented geofiltering, perhaps the most ironic and moronic kind of technology ever invented, so now my favorite show The Daily Show with Jon Stewart is no longer "available" in my "area". What they are hoping to achieve with this is beyond my comprehension. What the result is going to be is obvious though: their shows will get a smaller audience, and those who care enough will pirate them. And the pirated versions are of course stripped of all the commercials, the bread and butter of broadcasting corporations.

Oh well, in another 10-15 years the average CEO will be of my generation. By then I hope this kind of nonsense finally comes to an end.


Hmmm ...

Tuesday, April 28, 2009 | Permalink

Some left over debug code in Nvidia's webshop?

Or perhaps a warning regarding how truthful the information on the site is?

Screenshot is from yesterday night. Did not happen when I tried today.


Shader programming tips #4

Monday, April 27, 2009 | Permalink

The depth buffer is increasingly being used for more than just hidden surface removal. One of the more interesting uses is to find the position of already rendered geometry, for instance in deferred rendering, but also in plain old forward rendering. The easiest way to accomplish this is something like this:

float4 pos = float4(In.Position.xy, sampled_depth, 1.0);

float4 cpos = mul(pos, inverse_view_proj_scale_bias);

float3 world_pos = cpos.xyz / cpos.w;

The inverse_view_proj_scale_bias matrix is the inverse of the view_proj matrix multiplied with a scale_bias matrix that brings In.Position.xy from the [0..w, 0..h] range into the [-1..1, -1..1] range.
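To sanity check the matrix setup on the CPU, here is a minimal Python sketch of the same reconstruction. It assumes row vectors (as in HLSL's mul(pos, M)), a D3D-style projection with depth in [0..1], and pixel Y pointing down. The camera, the screen size and the helper names (mat_mul, vec_mul, mat_inverse, project_to_screen, reconstruct_world_pos) are made up for the illustration and are not from my framework.

```python
def mat_mul(a, b):
    """Product of two 4x4 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def vec_mul(v, m):
    """Row vector times matrix, matching HLSL mul(v, m)."""
    return [sum(v[k] * m[k][j] for k in range(4)) for j in range(4)]

def mat_inverse(m):
    """Gauss-Jordan inverse with partial pivoting."""
    aug = [[float(x) for x in row] + [float(i == j) for j in range(4)]
           for i, row in enumerate(m)]
    for col in range(4):
        pivot = max(range(col, 4), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [x / scale for x in aug[col]]
        for r in range(4):
            if r != col:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [row[4:] for row in aug]

# Hypothetical screen size and clip planes.
WIDTH, HEIGHT = 1280.0, 720.0
NEAR, FAR = 0.1, 1000.0

# Hypothetical camera: the view matrix is a plain translation and the
# projection is D3D-style (depth 0 at near, 1 at far).
view = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 5, 1]]
proj = [[1.0, 0, 0, 0],
        [0, WIDTH / HEIGHT, 0, 0],
        [0, 0, FAR / (FAR - NEAR), 1],
        [0, 0, -NEAR * FAR / (FAR - NEAR), 0]]
view_proj = mat_mul(view, proj)

# Brings pixel coordinates [0..w, 0..h] into [-1..1, -1..1], flipping Y.
scale_bias = [[2 / WIDTH, 0, 0, 0],
              [0, -2 / HEIGHT, 0, 0],
              [0, 0, 1, 0],
              [-1, 1, 0, 1]]
inverse_view_proj_scale_bias = mat_mul(scale_bias, mat_inverse(view_proj))

def project_to_screen(world_pos):
    """Forward path: world position to (pixel x, pixel y, depth)."""
    x, y, z, w = vec_mul(list(world_pos) + [1.0], view_proj)
    ndc_x, ndc_y, depth = x / w, y / w, z / w
    return ((ndc_x * 0.5 + 0.5) * WIDTH, (0.5 - ndc_y * 0.5) * HEIGHT, depth)

def reconstruct_world_pos(pixel_x, pixel_y, sampled_depth):
    """Same three steps as the shader snippet above."""
    pos = [pixel_x, pixel_y, sampled_depth, 1.0]
    cpos = vec_mul(pos, inverse_view_proj_scale_bias)
    return [c / cpos[3] for c in cpos[:3]]
```

Projecting a world position with project_to_screen() and feeding the result to reconstruct_world_pos() should give back the original position, up to floating point error.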

The same technique can of course also be used to compute the view position instead of the world position. In many cases you're only interested in the view Z coordinate though, for instance for fading soft particles, fog distance computations, depth of field etc. While you could execute the above code and just use the Z coordinate this is more work than necessary in most cases. Unless you have a non-standard projection matrix you can do this in just two scalar instructions:

float view_z = 1.0 / (sampled_depth * ZParams.x + ZParams.y);

ZParams is a float2 constant you pass from the application containing the following values:

ZParams.x = 1.0 / far - 1.0 / near;

ZParams.y = 1.0 / near;

If you're using a reversed projection matrix with Z=0 at far plane and Z=1 at near plane you can just swap near and far in the above computation.
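The ZParams values are easy to verify numerically. Below is a small Python sketch that assumes a standard D3D-style projection, where depth = far * (z - near) / (z * (far - near)). The function names are made up for the illustration.

```python
def d3d_depth(view_z, near, far):
    """Depth from a standard projection: 0 at the near plane, 1 at the far plane."""
    return far * (view_z - near) / (view_z * (far - near))

def z_params(near, far):
    """The two constants passed to the shader as ZParams.x and ZParams.y."""
    return (1.0 / far - 1.0 / near, 1.0 / near)

def view_z_from_depth(sampled_depth, params):
    """The two-instruction shader computation: one multiply-add, one reciprocal."""
    return 1.0 / (sampled_depth * params[0] + params[1])

near, far = 0.5, 500.0

# Standard projection: recover view Z from the stored depth.
standard = view_z_from_depth(d3d_depth(100.0, near, far), z_params(near, far))

# Reversed projection (1 at near, 0 at far): swap near and far in z_params.
reversed_depth = 1.0 - d3d_depth(100.0, near, far)
flipped = view_z_from_depth(reversed_depth, z_params(far, near))
```

Both standard and flipped come out as the original view Z of 100, up to floating point error.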


A couple of notes about Z

Tuesday, April 21, 2009 | Permalink

It is often said that Z is non-linear, whereas W is linear. This gives a W-buffer a uniformly distributed resolution across the view frustum, whereas a Z-buffer has better precision close up and poor precision in the distance. Given that objects don't normally get thicker just because they are farther away, a W-buffer generally has fewer artifacts on the same number of bits than a Z-buffer. In the past some hardware has supported W-buffers, but these days they are considered deprecated and hardware doesn't implement them anymore. Why, aren't they better? Not really. Here's why:

While W is linear in view space it's not linear in screen space. Z, which is non-linear in view space, is on the other hand linear in screen space. This fact can be observed by a simple shader in DX10:

float dx = ddx(In.position.z);

float dy = ddy(In.position.z);

return 1000.0 * float4(abs(dx), abs(dy), 0, 0);

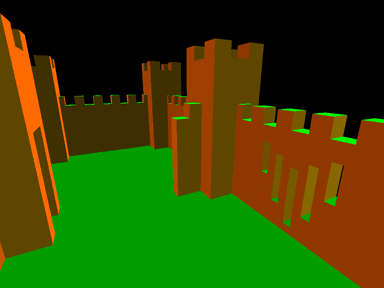

Here In.position is SV_Position. The result looks something like this:

Note how all surfaces appear single colored. The difference in Z pixel-to-pixel is the same across any given primitive. This matters a lot to hardware. One reason is that interpolating Z is cheaper than interpolating W. Z does not have to be perspective corrected. With cheaper units in hardware you can reject a larger number of pixels per cycle with the same transistor budget. This of course matters a lot for pre-Z passes and shadow maps. With modern hardware linearity in screen space also turned out to be a very useful property for Z optimizations. Given that the gradient is constant across the primitive it's also relatively easy to compute the exact depth range within a tile for Hi-Z culling. It also means techniques such as Z-compression are possible. With a constant Z delta in X and Y you don't need to store a lot of information to be able to fully recover all Z values in a tile, provided that the primitive covered the entire tile.
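The constant pixel-to-pixel delta can also be checked outside of a shader. This Python sketch walks across a slanted plane in equal screen-space steps and compares the deltas of Z and W. The plane, the clip planes and the function names are arbitrary choices for the illustration, and the projection is assumed to be the standard D3D-style one.

```python
NEAR, FAR = 1.0, 100.0

def surface_view_z(screen_x, z0=10.0, slope=2.0):
    """View-space Z (that is, W) of the plane z = z0 + slope * x seen at screen_x = x / z."""
    return z0 / (1.0 - slope * screen_x)

def depth_from_view_z(view_z):
    """Post-projection Z as written to the depth buffer."""
    return FAR * (view_z - NEAR) / (view_z * (FAR - NEAR))

def deltas(values):
    """Differences between neighboring samples, like ddx() across a row of pixels."""
    return [b - a for a, b in zip(values, values[1:])]

# Equal steps in screen space across the primitive.
screen_xs = [i * 0.1 for i in range(-4, 5)]
w_values = [surface_view_z(s) for s in screen_xs]
z_values = [depth_from_view_z(w) for w in w_values]

z_deltas = deltas(z_values)  # constant across the primitive
w_deltas = deltas(w_values)  # grows with distance
```

The entries of z_deltas are all the same up to floating point error, whereas w_deltas keeps growing as the plane recedes. That constant delta is what makes it possible to describe a whole tile with just a start value and two gradients.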

These days the depth buffer is increasingly being used for other purposes than just hidden surface removal. Being linear in screen space turns out to be a very desirable property for post-processing. Assume for instance that you want to do edge detection on the depth buffer, perhaps for antialiasing by blurring edges. This is easily done by comparing a pixel's depth with its neighbors' depths. With Z values you have constant pixel-to-pixel deltas, except for across edges of course. This is easy to detect by comparing the delta to the left and to the right, and if they don't match (with some epsilon) you crossed an edge. And then of course the same with up-down and diagonally as well. This way you can also reject pixels that don't belong to the same surface if you implement say a blur filter but don't want to blur across edges, for instance for smoothing out artifacts in screen space effects, such as SSAO with relatively sparse sampling.
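As a rough illustration of the left/right delta comparison, here is a Python sketch operating on a single row of depth values. The epsilon and the sample depths are made-up numbers, and a real implementation would of course run in a pixel shader and check the vertical and diagonal neighbors too.

```python
def find_depth_edges(depths, epsilon=1e-4):
    """Return indices where the delta to the left and to the right don't match."""
    edges = []
    for i in range(1, len(depths) - 1):
        left_delta = depths[i] - depths[i - 1]
        right_delta = depths[i + 1] - depths[i]
        if abs(left_delta - right_delta) > epsilon:
            edges.append(i)
    return edges

# Two surfaces, each with its own constant pixel-to-pixel delta,
# separated by a depth discontinuity between index 4 and index 5.
near_surface = [0.500 + 0.001 * i for i in range(5)]
far_surface = [0.700 + 0.002 * i for i in range(4)]
row = near_surface + far_surface

edges = find_depth_edges(row)  # [4, 5]
```

Both pixels next to the discontinuity get flagged, while the interior of each surface passes the test regardless of how steeply it slopes. A blur filter can use the same comparison to skip samples that belong to a different surface.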

What about the precision in view space when doing hidden surface removal then, which is still the main use of a depth buffer? You can regain most of the lost precision compared to W-buffering by switching to a floating point depth buffer. This way you get two types of non-linearities that to a large extent cancel each other out, that from Z and that from a floating point representation. For this to work you have to flip the depth buffer so that the far plane is 0.0 and the near plane 1.0, which is something that's recommended even if you're using a fixed point buffer since it also improves the precision of the math during transformation. You also have to switch the depth test from LESS to GREATER. If you're relying on a library function to compute your projection matrix, for instance D3DXMatrixPerspectiveFovLH(), the easiest way to accomplish this is to just swap the near and far parameters.
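To get a feel for the difference, this Python sketch rounds projected depths to 32-bit floats and counts how many distinct values survive for a set of distant surfaces, first with the standard mapping and then with near and far swapped. The clip planes, the sample distances and the helper names are assumptions picked for the illustration. It only models the final storage in the depth buffer, not the precision of the transform math.

```python
import struct

def to_float32(x):
    """Round a Python float to the nearest 32-bit float."""
    return struct.unpack("f", struct.pack("f", x))[0]

def projected_depth(view_z, near, far):
    """D3D-style perspective depth: 0 at `near`, 1 at `far`."""
    return far * (view_z - near) / (view_z * (far - near))

def count_distinct_depths(view_zs, near, far):
    """How many of the surfaces still have unique depths in a float32 buffer."""
    return len({to_float32(projected_depth(z, near, far)) for z in view_zs})

NEAR, FAR = 0.1, 1000.0

# 1000 surfaces spaced 0.1 units apart in the distant part of the frustum.
distant_zs = [900.0 + 0.1 * i for i in range(1000)]

standard_count = count_distinct_depths(distant_zs, NEAR, FAR)
# Swapping the near and far parameters gives 1.0 at near and 0.0 at far.
reversed_count = count_distinct_depths(distant_zs, FAR, NEAR)
```

With the standard mapping most of the surfaces collapse onto the same few float values just below 1.0, so standard_count ends up far below 1000. With the reversed mapping the distant depths land close to 0.0, where floats are dense, and every surface keeps its own value.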

Z ya!


Piracy

Friday, April 17, 2009 | Permalink

As many of you may have read in the news, the verdict in the Pirate Bay trial was delivered today, sentencing the four defendants to jail and 30 million SEK (~$3.5M) in damages. This will undoubtedly generate quite a lot of fuss and I wanted to share my thoughts on piracy in general. Given that this is quite a controversial issue I want to begin by pointing out that these are entirely my own personal opinions and do not in any shape or form represent the opinions of my employer, former or future employers, my friends, relatives, my penguin or my ceiling cat. This represents my view as of 2009-04-17, and I reserve the right to change my opinions at any point, as I’ve done in the past. The reason I say that is because this issue is fundamentally hard, very fuzzy and with many shades of gray in the palette, legally and especially morally. Some people see it as black or white though. You can generally categorize those into two groups: the ignorant and those with an agenda. I say the ignorant because I find it hard to believe anyone who considered the arguments from both sides would end up at either extreme. Those with an agenda are of course the corporate representatives and the anti-copyright activists.

While reiterating that I’m representing only myself here, for the sake of the argument: I do work in the game industry, and my income is dependent on people paying for games. That doesn’t automatically land me in the property rights corner. In fact, talking to my colleagues I find that the opinions vary, but I hear more piracy-friendly opinions than the opposite. I’m probably leaning somewhat more towards property rights than many of my friends though.

Let me elaborate on why I’m rejecting the extremes. I’ll begin with the “piracy is theft” argument. The people trying to push this idea are making a fundamental mistake. Piracy is not theft. Morally questionable? Perhaps. But it’s not theft. By reiterating this argument over and over they are feeding the trolls in the other camp and giving them a reason to follow the tangent all the way to the conclusion that property rights don’t exist. The difference between piracy and theft is that when you steal something the owner loses what you stole from him. When you copy something, the owner doesn’t lose what you copied. So that makes it OK, right? Not necessarily. Just because it’s not theft doesn’t make it right. Rape, for instance, is not theft either, but of course morally and legally indefensible. Piracy is of course not rape either, but it is copyright infringement. Copying someone else’s work without permission is indeed questionable. Anti-copyright activists argue it’s perfectly fine, and it’s of course hard to see the harm if you don’t personally know the author. It’s clear why the victim of theft suffers, and it pretty much automatically invokes a sense of moral wrong in all normal people, in a way that copyright infringement does not for most people. Arguing for intellectual property is usually done on rational grounds, rather than based on principles or a moral sense. But let me give you an example where copying someone else’s work without permission should give you a sense that it’s morally wrong. Let’s say a school class is handed some homework. Lisa spends all evening researching and writing down all answers to the questions. Steve on the other hand spends the night in front of the TV. Next morning he secretly borrows Lisa’s notes and copies the answers. Lisa still got her sheet of answers and will still get a good grade, so that makes it OK, right? No, of course not. The answers belong to Lisa. She spent the work assembling them. They are her intellectual property.

So why do we feel the moral indignation in this case? Because we imagine Lisa being an innocent schoolchild, and Steve is taking advantage of her work. It’s harder to feel the same sympathy for an artist making millions. Of course, the vast majority of artists don’t make millions, but that’s less frequently brought up in these kinds of discussions. Let’s ignore that for now. I’m sure pretty much everyone would agree however that it’s not right to copy Lisa’s work without her permission. However, if Lisa herself decides to share her work with her classmate it’s a totally different matter. Anti-copyright activists basically side with Steve in this matter though. Just because it’s possible to copy doesn’t make it right to do so.

Now, while defending the author’s right to his or her intellectual property, the question is how companies and society as a whole should react to this phenomenon. All over the world copyright laws are tightened, property rights are extended to ridiculous times, kids are sued over downloading a song, personal privacy is violated and corporations are taking over the police’s role in criminal investigation. This is pretty backwards in all possible ways. Sweden recently implemented new tougher laws that force ISPs to provide the personal information for the individual a certain IP address belongs to. The immediate response to this has been that internet traffic has dropped significantly and legal purchases of things like music, games and movies have gone up. This is probably the effect lawmakers were hoping for. I would agree that it’s a positive development if this is happening, but this is obviously a temporary effect. When the first anxiety over the new laws has settled and people realize there’s no way they can sue an entire generation of youth and their parents and schools, things will of course go back to normal again. But the more important point is that this change in behavior is not happening for the right reasons. People are changing their behavior because of fear, not because they found the legal alternative more appealing. This is not the society we want. And it’s all pointless anyway. It should be pretty clear that there’s no technical or legal way to win this battle. Piracy is here to stay (not that piracy is new either).

I do defend copyright law and the concept of intellectual property. The question is, how much effort should society put into preventing piracy? While the laws in this area were probably originally written back in Gutenberg’s days, so some updates and clarifications on how they apply to modern content are necessary, I don’t think we need to change the basic principles. If anything, the more logical direction would be towards greater personal freedom. Things move faster these days, so if anything, things should get into the public domain quicker, patents should last a shorter time, and greater liberties with regards to “fair use” should apply. When more things are technically possible, and public opinion generally favors greater liberties, it only makes sense that laws should follow in that direction.

Another thing to consider is what effect piracy has on society as a whole. I won’t lie, I have listened to music, seen movies, used software and played games that were not paid for and properly licensed. As I grew older and got a stable income, it’s something that occurs far less frequently than in the past though. When I got my first real job I decided I could now afford to buy my games. So for the last 6 years or so I haven’t pirated any games for instance. This is something I think I share with a lot of people. Instead of fussing around with cracks that don’t always work and lock you out from being able to patch the game, and not knowing if this stuff from shady people comes with any bonus viruses or trojans, you simply decide that buying the game is a more attractive option. If you have a job, it’s not much money, and it just works. But if you’re a student who can barely afford your food and housing, you may see things differently. What do we gain from preventing this student from pirating a game? Most likely he wouldn’t buy the game anyway in most cases if that was the only option. While games are non-essential one could argue it’s not much of a loss either that he can’t play it. But what about other software? The first software I ever pirated was a compiler. A stack of floppy disks, RAR for DOS, and several rounds back and forth between home and school over a couple of days and the compiler was up and running. Imagine if I had not done that. Imagine I would not have spent endless hours coding at home on the pirated compiler. Would I be where I am today? Most likely not. But I was 16 years old, had just realized what my biggest talent was, and needed the tools to evolve it. I had no money, nor were my parents particularly wealthy considering we were 7 kids and a single income, and I really don’t think my parents would have understood how significant buying a compiler for me would have been. I’m not sure I fully understood that myself at the time.
There have been studies showing that piracy has a positive effect on society as a whole. I don’t doubt that given my own personal experience.

In my opinion, the proper response from society is realizing that we have a new situation enabled by modern technology. Trying to fight off pirates is perhaps a good idea around the coast of Somalia, but I don’t think there’s any government response necessary at all for the pirates here at home. We need a new mindset however with greater freedoms for individuals and more content available for free. There’s a precedent in the form of libraries. You have copyrighted material available there that anyone can read. If you’re a writer, your work may very well be read by people at the library, and this could perhaps reduce the number of books sold. This has not been a disaster for writers. In fact, one could argue that writers have benefitted from it, as more people get exposed to their work. I admit it has been a long time since I was in a library, but even back in my school days there were CDs available you could listen to there too. I didn’t ruin the music industry. I don’t know if computer games have made it into any libraries these days, but perhaps in the future you’ll be able to try them out there. Although in a sense, the Internet is replacing the library these days. You don’t have to find the right book on the library shelves, but a few keywords in Google and the stuff you require may be readily available.

What about the producers then? Well, new situations require new business models. Companies stuck in the past will go under. No amount of legal action will prevent that. If you’re producing music on CD, your days are numbered. Optical media for music in 2009, seriously? The only solid approach to dealing with piracy is ensuring that the paid-for experience is better than the pirated one. This basic concept appears to be really hard to grasp for some of the old folks in some corporate headquarters. There’s no technical solution that will prevent piracy. If you’re considering sophisticated DRM schemes, realize that you’re primarily hurting your paying customers, not the pirates. Once the cracked version is out there it’s going to be stripped of all the limitations, whereas the paying customer will have to live with the restrictions you imposed on the software. And seriously, it’s 2009 and we live in a globalized world, and the Internet knows no country borders. Releasing any form of digital product at different times in different regions is a fundamentally broken business model. You can either wait until the product is available in your region, or you can download it and enjoy it right away. Congratulations, you just created an incentive for people to pirate. It’s not rocket science. If you’re an artist, instead of seeing it as a threat, use the Internet as a tool for marketing. It may be harder to sell the music these days, but instead of whining over that, consider yourself the product and the music your marketing. Provide the music for free and earn your money from concerts, T-shirts and other fan material. The new reality is that people will copy your stuff, so instead of making that a problem, the future belongs to those who are able to take advantage of it.


Easter egg

Monday, April 13, 2009 | Permalink

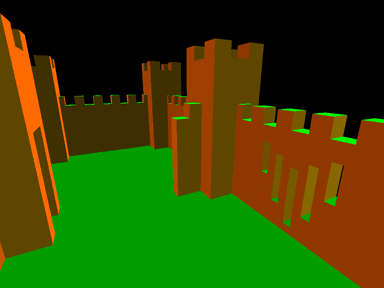

In the spirit of the season, here's my favorite easter egg of all time.

It's also from my favorite game of all time. Yep, that's Unreal, the original from 1998.


OpenGL 3

Friday, April 10, 2009 | Permalink

In my framework work I've started adding OpenGL 3.x support. I was one of the angry voices about what happened with OpenGL 3.0 when it was released. It just didn't live up to what had been promised, in addition to being a whole year too late. While OpenGL 3.0 didn't clean up any of the legacy crap, OpenGL 3.1 finally does. A bunch of old garbage that was simply labelled deprecated in 3.0 has now actually been removed from OpenGL 3.1. As it looks now, OpenGL is on the right track; the question is just whether it can make up for lost time and keep up with Direct3D going forward.

However, along with the OpenGL 3.1 specification they also came up with the horrible GL_ARB_compatibility extension. I don't know what substance they were under the influence of while coming up with this brilliant idea. Basically this extension brings back all legacy crap that we finally got rid of.

This extension really should not exist. Please IHVs, I urge you to NOT implement it. Please don't. Or at the very least don't ship drivers with it enabled by default. If you're creating a 3.1 context, you're making a conscious decision not to use any of the legacy crap. Really, before venturing into OpenGL 3.1 land, you should've made the full transition to OpenGL 3.0. Fine, if a developer for some reason still needs legacy garbage during a transitional period while porting over the code to 3.1 he can enable it via a registry key or an IHV provided tool. But if you're shipping drivers with it enabled by default, you know what's going to happen. An important game or application is going to ship requiring the support of this extension, and all the work done on cleaning up the OpenGL API is going to be wasted. It would have to be supported forever and we're back at square one.

In my framework I'm going to use the WGL_CONTEXT_FORWARD_COMPATIBLE_BIT_ARB flag. This means that all legacy crap will be disabled. For instance calling glBegin()/glEnd() will be a nop. This will ensure that I'm sticking to modern rendering techniques. All new code should be using this. The only reason for not using it is if you're porting over some legacy code. The deprecation model that OpenGL 3.0 added is fundamentally good. It allows us to clean up the API while giving developers the ability to upgrade their code. If done right the whole OpenGL ecosystem would modernize by necessity. This of course relies on Khronos not to poop all over the efforts with stuff like GL_ARB_compatibility.

