
Baking with Humus

Saturday, August 22, 2009 | Permalink

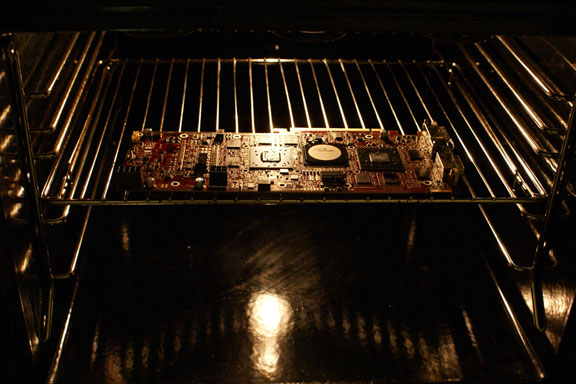

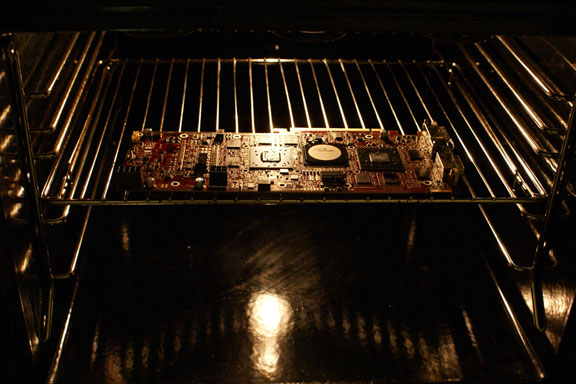

Today we'll be baking a working GPU.

What you need:

1) One broken GPU

2) A crazy mind

Verify the GPU is still broken.

Remove heatsink and all detachable parts from GPU. Put the GPU on a few supporting screws in the middle of the oven and bake at 200-275C until lightly brown on various plastic parts.

Let it cool gently in the oven. Reattach heatsink and other parts. Put into computer and boot it up. Verify that the card is now functional.

Whooha, you actually did that? Yep.

So what's the deal? Well, I read a forum post where someone had resurrected a video card by putting it into the oven. Some people also claim to have resurrected their Xbox360 by wrapping it in a few towels and letting it run for about 20 minutes. The reason this sometimes works is that a leading cause of hardware failure is solder joints that crack over time. If you heat the device enough, the solder joints will melt and reconnect. The melting point of commonly used solders is within reach of a regular household oven.

Some of you may recall I had a broken 3870 X2. So I thought I should attempt this trick on it. Just to give me a sense of how well this would work I first attempted this on an old SiS AGP card I found in my closet. I didn't even know I owned one, heh. So I put it into the oven and turned it to 200C. It didn't even reach that temperature before I heard something fall. Turned out some component fell off.

The lesson learned was not to put the card in upside down. It seems kind of obvious afterwards: once the solder melts, a component hanging under the card with only its solder joints supporting it will of course fall off.

Then I tried it on the 3870 X2. I had to heat it much further before the solder melted. I opened the oven and poked a solder joint now and then to see if it was still hard, and I had to go all the way to 275C before it finally melted. I turned off the oven and let the card cool gently in there for a couple of hours before taking it out.

Then I looked very carefully at all the solder joints to verify that nothing had melted too much and made a short circuit. This is of course a very important step if you're attempting this trick. A short circuit could potentially damage your whole computer or cause a fire, so don't try this if you're unwilling to take any chances. Keep an eye on the card during the whole process and double check the results. If you see any sign of solder having flowed away from its attachment point, you may want to consider not booting it up.

Everything looked fine on my card, but I did at least make a full backup of my important files to an external drive before putting it into the computer. After booting up, the card now appears to work. I've been gaming on it for a couple of hours with no problems so far.

[ 24 comments | Last comment by Mickey (2014-01-20 02:27:32) ]

Just Cause 2

Thursday, August 20, 2009 | Permalink

The Just Cause 2 website just got online. Check it out!

[ 0 comments ]

Tough times in the games industry

Thursday, August 13, 2009 | Permalink

Sad to see, but Swedish game developer GRIN has gone bankrupt and closed its doors. On a positive note, their thank you letter ends with a "brb".

[ 4 comments | Last comment by mg (2009-08-13 11:09:18) ]

OpenGL 3.2

Saturday, August 8, 2009 | Permalink

Having been on vacation I'm a bit late to the party, but OpenGL 3.2 has been released and the specs are available here.

I like the progress OpenGL has made lately and it looks like the API is fully recovering from the years where it was falling behind. If Khronos can keep up this pace OpenGL will remain a relevant API. I hope the people behind GLEW and GLEE get up to speed though, because neither has implemented OpenGL 3.1 yet. In the past I rolled my own extension loading code, but I was hoping to not have to do that and to use libraries like the above instead. Looks like I might have to do my own in the future as well just to be able to use the latest and greatest.

[ 26 comments | Last comment by kRogue (2009-08-16 22:42:47) ]

Performance

Wednesday, July 22, 2009 | Permalink

The key to getting good performance is properly measuring it. This is something that every game developer should know. Far from every academic figure understands this though. When a paper is published on a particular rendering technique it's important to me as a game developer to get a good picture of what the cost of using this technique is. It bothers me that the performance part of the majority of technical papers is poorly done. Take for instance this paper on SSDO. This paper isn't necessarily worse than the rest, but just an example of common bad practice.

First of all, FPS is useless. Gamers like it, it often shows up in benchmarks and so on, but game developers generally talk in milliseconds. Why? Because frames per second by definition includes everything in the frame, even things that are not core components of the rendering technique. For SSDO, which is a post-process technique, any time the GPU needs for buffer clears, main scene rendering etc. is irrelevant. But it's part of the fps number, and you don't know how much of the fps number it is. Therefore a claimed overhead of 2.4% of SSDO vs. SSAO, for instance, is meaningless. The SSDO part may actually be twice as costly as SSAO, but large overhead in other parts of the scene rendering could hide this fact.

For instance, if the GPU needs 12 milliseconds to complete main scene rendering and 0.5ms to complete SSAO, that'll give you 12.5ms in total for a framerate of 80 fps. If SSDO increases the cost to 1ms, which would then be twice that of SSAO, the total frame runs in 13ms for a framerate of 77 fps. 4% higher cost doesn't seem bad, but it's 0.5ms vs. 1ms that's relevant to me, which would be twice as expensive and thus paint a totally different picture of the cost/quality of it versus SSAO. Not that these numbers are likely to be true for SSDO though. This paper was kind enough to mention a "pure rendering" number (124fps at 1600x1200), which is rare to see, so at least in this case we can reverse engineer some actually useful numbers from the useless fps table, although only for the 1600x1200 resolution.

So main scene rendering is 1000/124 = 8.1ms, which is definitely not an insignificant number.

With SSAO it's 1000/24.8 = 40.3ms

With SSDO it's 1000/24.2 = 41.3ms

So the cost of SSAO is 40.3ms - 8.1ms = 32.2ms, and for SSDO it's 41.3ms - 8.1ms = 33.2ms

Therefore, SSDO is actually about 3% more costly than SSAO, rather than 2.4%. Still pretty decent, if you don't consider that their SSAO implementation obviously is much slower than any SSAO implementation ever used in any game. 32.2ms means it doesn't matter how fast the rest of the scene is, you'll still never go much above 30fps. Clearly not useful in games.
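The reverse engineering above can be reproduced with a few lines of Python. This is only a sketch: the function names are made up for illustration, and the inputs are the fps figures quoted from the paper's 1600x1200 results. It does not round the intermediate values, so its results need not match hand-rounded figures to the last digit.

```python
def frame_ms(fps: float) -> float:
    """Convert frames per second to milliseconds per frame."""
    return 1000.0 / fps


def technique_cost_ms(fps_with: float, fps_baseline: float) -> float:
    """Time a technique adds to the frame, given the fps measured with it
    enabled and the fps of the same scene without it (hypothetical helper)."""
    return frame_ms(fps_with) - frame_ms(fps_baseline)


# Figures quoted in the text for 1600x1200.
baseline_fps = 124.0  # "pure rendering"
ssao_fps = 24.8
ssdo_fps = 24.2

ssao_ms = technique_cost_ms(ssao_fps, baseline_fps)
ssdo_ms = technique_cost_ms(ssdo_fps, baseline_fps)
overhead_percent = 100.0 * (ssdo_ms / ssao_ms - 1.0)

print(f"SSAO costs {ssao_ms:.1f} ms, SSDO costs {ssdo_ms:.1f} ms")
print(f"SSDO is {overhead_percent:.1f}% more expensive than SSAO")
```

The point of subtracting the baseline is that the comparison is then between the two techniques alone, not between two whole frames.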

Another reason that FPS is a poor measure is that it skews the perception of what's expensive and not. If you have a game running at 20fps and you add an additional 1ms worth of work, you're now running at 19.6fps, which may not look like you really affected performance all that much. If you have a game running at 100fps and add the exact same 1ms workload you're now running at 90.9fps, a substantially larger decrease in performance both in absolute fps numbers and as a percentage.
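To see the skew for yourself, here is a small Python sketch (the helper name is hypothetical) that adds the same fixed cost to two different starting framerates:

```python
def fps_after_adding(fps: float, extra_ms: float) -> float:
    """Framerate after adding extra_ms of work to every frame."""
    return 1000.0 / (1000.0 / fps + extra_ms)


for start_fps in (20.0, 100.0):
    new_fps = fps_after_adding(start_fps, 1.0)
    drop = start_fps - new_fps
    print(f"{start_fps:.0f} fps + 1 ms -> {new_fps:.1f} fps "
          f"(drop of {drop:.1f} fps, {100.0 * drop / start_fps:.1f}%)")
```

The added work is identical in both cases; only the fps scale makes it look different.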

Percentage numbers btw are also useless generally speaking. When viewed in isolation to compare two techniques and you compare the actual time cost of that technique only, it can be a valid comparison. Like how many percent costlier SSDO is vs. SSAO (when eliminating the rest of the rendering cost from the equation). But when comparing optimizations or the cost of adding new features it's misleading. Assume you have a game running at 50fps, which is a frame time of 20ms. You find an optimization that eliminates 4ms. You're now running at 16ms (62.5fps). The framerate increased 25%, and the frame time went down 20%. Already there it's a bit unclear what number to quote. Gamers would probably quote 25% and game developers -20% (unless the game developers want to brag, which is not an entirely unlikely scenario).

Now let's say the next day another developer finds another optimization that shaves off another 4ms. The game now runs at 12ms (83.3fps). The framerate went up 33% and the frame time was reduced by 25%. Looking at the numbers, it would appear that the second developer did a better optimization. However, they are both equally good since they shaved off the same amount of work, and had the second developer done his optimization first he would instead have gotten the lower score.
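The two optimizations in this example can be tabulated with a short Python sketch (the names are illustrative only). Both steps save the same 4ms, yet the percentages differ depending on which one happened to land first:

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    return 100.0 * (after - before) / before


# Frame time before any optimization, after the first, and after the second.
frame_times_ms = [20.0, 16.0, 12.0]

for before, after in zip(frame_times_ms, frame_times_ms[1:]):
    saved_ms = before - after
    fps_change = percent_change(1000.0 / before, 1000.0 / after)
    time_change = percent_change(before, after)
    print(f"{before:.0f} ms -> {after:.0f} ms: saved {saved_ms:.0f} ms, "
          f"fps {fps_change:+.1f}%, frame time {time_change:+.1f}%")
```

Only the "saved" column is the same for both developers, which is why the time saved is the number worth quoting.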

Another thing to note is that it's never valid to average framerate numbers. If you render three frames at 4ms, 4ms and 100ms you get an average framerate of (1000/4 + 1000/4 + 1000/100) / 3 = 170fps. If you render at 10ms, 10ms and 10ms you get an average framerate of 1000/10 = 100fps. Not only does the latter complete in just 30ms versus 108ms, but it also did so hitch free, yet gets a lower average framerate.
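A quick Python sketch of the difference, using the frame times from the example above (the function names are made up for illustration). Averaging the per-frame fps values rewards the hitchy run, while dividing the frame count by the total time does not:

```python
def mean_of_framerates(frame_times_ms: list[float]) -> float:
    """The misleading way: average the per-frame fps values."""
    return sum(1000.0 / t for t in frame_times_ms) / len(frame_times_ms)


def framerate_of_mean_time(frame_times_ms: list[float]) -> float:
    """Frames actually rendered per second: frame count over total time."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


hitchy = [4.0, 4.0, 100.0]
smooth = [10.0, 10.0, 10.0]

for name, times in (("hitchy", hitchy), ("smooth", smooth)):
    print(f"{name}: total {sum(times):.0f} ms, "
          f"mean of fps {mean_of_framerates(times):.1f}, "
          f"fps from mean time {framerate_of_mean_time(times):.1f}")
```

Even the second number hides the 100ms hitch, so for judging smoothness the individual frame times are still the thing to look at.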

The only really useful number is time taken. Framerate can be mentioned as a convenience, but any performance chapter in technical papers should write their results in milliseconds (or microseconds if you so prefer). There are really no excuses for using fps. These days there are excellent tools for measuring performance, so if the researcher would just run his little app through PIX he could separate the relevant cost from everything else and provide the reader with accurate numbers.

[ 10 comments | Last comment by Rob L. (2009-07-26 21:35:10) ]

IGN: The 10 Best Game Engines of This Generation

Wednesday, July 15, 2009 | Permalink

Well, Avalanche Engine is one of them.

[ 6 comments | Last comment by Agadoul (2009-07-23 10:10:19) ]

Bullshit!

Monday, July 13, 2009 | Permalink

I'm a big fan of the Penn & Teller Bullshit! show. They usually do a great job at exposing all kinds of bullshit for what it really is. The latest episode takes a jab at everything that video games are blamed for, despite the lack of scientific evidence backing up such claims. Well worth watching.

Part 1 | Part 2 | Part 3

[ 10 comments | Last comment by Rob L. (2009-07-25 11:58:06) ]

Yet another particle trimmer update

Wednesday, July 8, 2009 | Permalink

In the last release I messed up atlases again, so now that's fixed.

I also added an option to optimize vertex ordering in the spirit of these findings. You provide your index buffer and it outputs the vertices in the order that minimizes the internal edges. This option will probably only have a measurable effect on small particles though. But it's a trivial thing to compute, so it doesn't hurt to do it.

Finally, I've changed the input options to a more standard and practical format now that the number of options has increased. You no longer need to provide all parameters just because you wanted to change the last one; instead you can, for instance, do this to change only the maximum hull size:

> ParticleTrimmer imagefile 4 8 -h 40

With these changes I guess the tool is pretty much "final", unless I come up with a solution to the performance problem other than reducing the number of edges in the original convex hull to a manageable number.

[ 0 comments ]
